Support vector machines explained

Support vector machines in simple terms are a form of machine learning classification that uses a line that marks the boundary between the classes of the data points.

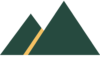

The boundary, called the decision boundary, perfectly splits the area between the closest data points of the classes.

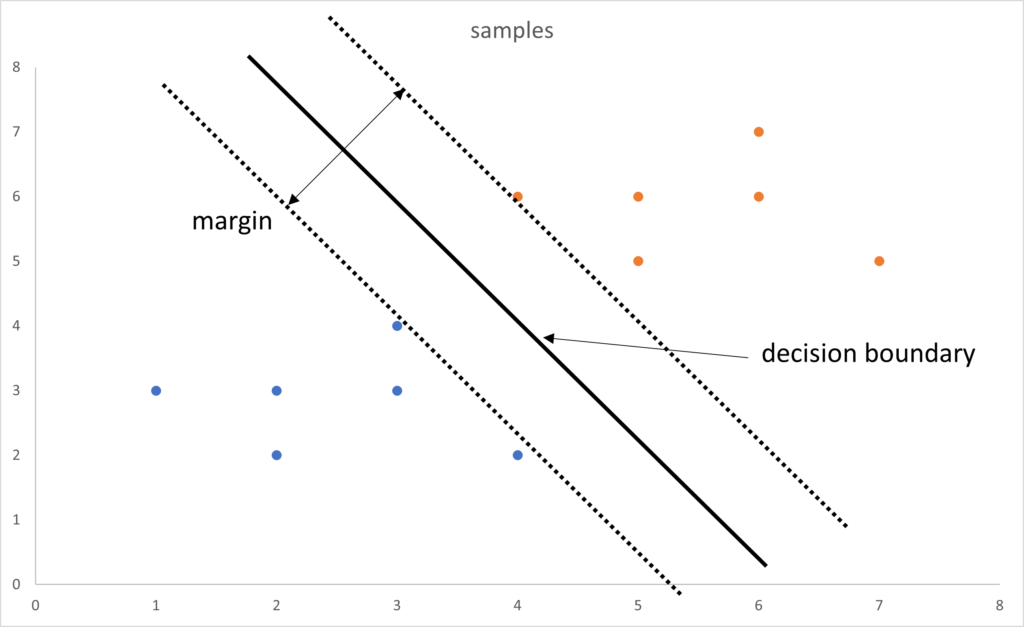

The closest data points from each class are called support vectors and the gap between them is the margin.

In this example we have twelve data points that can be split by a line between the two classes (blue and orange).

We can now use this line to classify any new points as either in the blue class or the orange class, depending on which side of the line it is.

The best line, called the decision boundary, that can determine the class of a data point is the widest margin between the closet points of each class. These points are called the support vectors.

To calculate the position of the decision boundary it is necessary to calculate the maximum margin between the support vectors, the closest data points from the two classes. This can be achieved using a classifier such as a maximum margin classifier.

To represent data points with two variables we can separate these points with a line, if there are more variables, also called features, then it is said we have more dimensions.

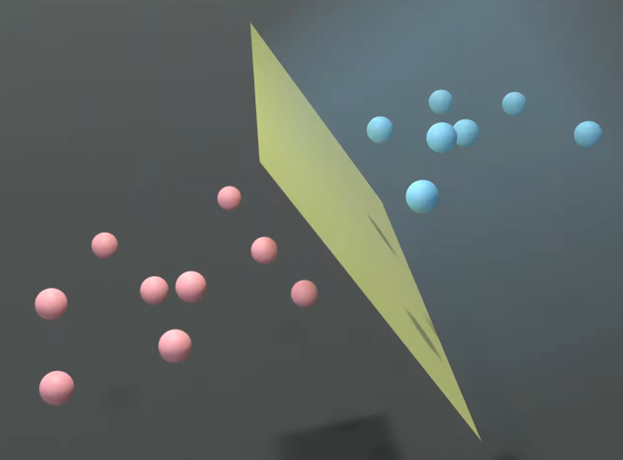

To separate points where there are more than two dimensions then the data points are separated by a hyperplane.

When we are able to easily separate the data points into their classes and create the decision boundary and the maximum margin without any cases that are within the margin, it is known as a hard margin.

In some cases the data can not be separated by a line, there are cases where there are data points within the boundary, possibly of the other class. In this case we can use a soft margin.

Kernel Trick

In some cases the classes will not be separable with data points either side of any decision boundary.

There is a technique to create a margin called the kernel trick.

The kernel trick in simple terms is the creation of a different view, or dimension, in which all the points in one class are separate from the data points in the other class.

Because there is a gap or margin between the data points in each class the decision boundary can be created.

Imagine if the blue points are birds traveling one way and the orange points are birds traveling in the opposite direction.

As the birds cross a person looking up could not separate the two flock of birds.

But if we could look at the height of the two flocks of birds we could easily separate the birds represented by the blue points and the birds seen as the orange points.

In this example, we can think of the view of the birds from the ground as a two dimensional view. but once we add their height off the ground then we are using three dimensions.

This is the kernel trick.